Online seminar March 21

Trond Engum and Sigurd Saue (Trondheim)

Bernt Isak Wærstad (Oslo)

Marije Baalman (Amsterdam)

Joshua Reiss (London)

Victor Lazzarini (Maynooth)

Øyvind Brandtsegg (San Diego)

Instrument design, software, control

We now have some tools that allow practical experimentation, and we’ve had the chance to use them in some sessions. We have some experience as to what they solve and don’t solve, how simple (or not) they are to use. We know that they are not completely stable on all platforms, there are some “snags” on initialization and/or termination that give different problems for different platforms. Still, in general, we have

just enough

to evaluate the design in terms of instrument building, software architechture, interfacing and control.

We have identified

two distinct modes

of working crossadaptively: The

Analyzer-Modulator

workflow, and a

Direct-Cross-Synthesis

workflow. The Analyzer-Modulator method is comprised of extracting features, and arbitrarily mapping these features as modulators to any effect parameter. The Direct-Cross-Synthesis method is comprised by a much closer interaction directly on the two audio signals, for example as seen with the

liveconvolver

and or different forms of adaptive resonators. These two methods give very different ways of approaching the crossadaptive interplay, with the direct-cross-synthesis method being perceived as

closer to the signal

, and as such, in many ways

closer to each other

for the two performers. The Analyzer-Modulator approach allows arbitrary mappings, and this is both a strngth and a weakness. It is powerful by allowing any mapping, but it is harder to find mappings that are musically and performatively engaging. At least this can be true when a mapping is used without modification over a longer time span. As a further extension, an even more distanced manner of crossadaptive interplay was recently suggested by Lukas Ligeti (UC Irvine, following Brandtsegg’s presentation of our project there in January). Ligeti would like to investigate crossadaptive modulation on MIDI signals between performers. The mapping and processing options for event-based signals like MIDI would have even more degrees of freedom than what we achieve with the Analyzer-Modulator approach, and it would have an even greater degree of “remoteness” or “disconnectedness”. For Ligeti, one of the interesting things is the diconnectedness and how it affects our playing. In perspective, we start to see some different

viewing angles

on how crossadaptivity can be implemented and how it can influence communication and performance.

In this meeting we also discussed problems of the current tools, mostly concerned with the tools of the Analyzer-Moduator method, as that is where we have experienced the most obvious technical hindrance for effecttive exploration. One particular problem is the use of MIDI controller data as our output. Even though it gives great freedom in

modulator destinations

, it is not straightforward for a system operator to keep track of which controller numbers are actively used and what destinations they correspond to. Initial investigations of using OSC in the final interfacing to the DAW have been done by Brandtsegg, and the current status of many DAWs seems to allow “auto-learning” of OSC addresses based on touching controls of the modulator destination within the DAW. a two-way communication between the DAW and ouurr mapping module should be within reach and would immensely simplify that part of our tools.

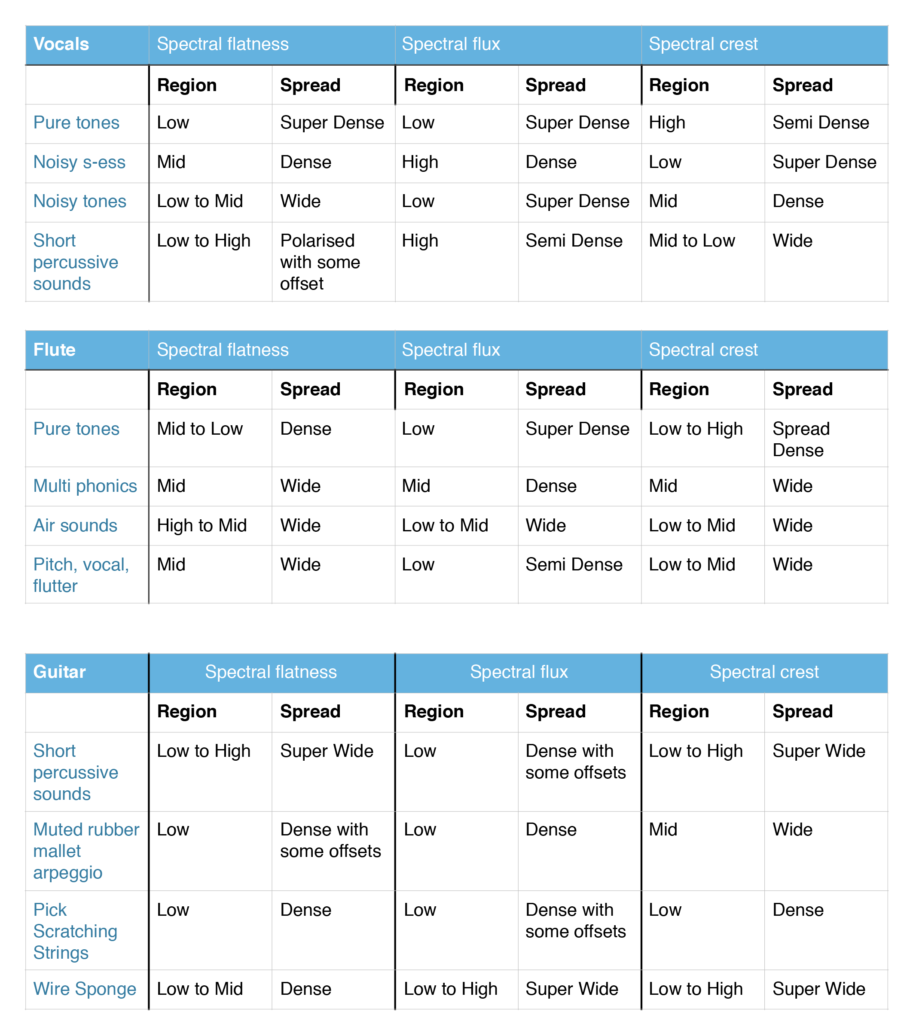

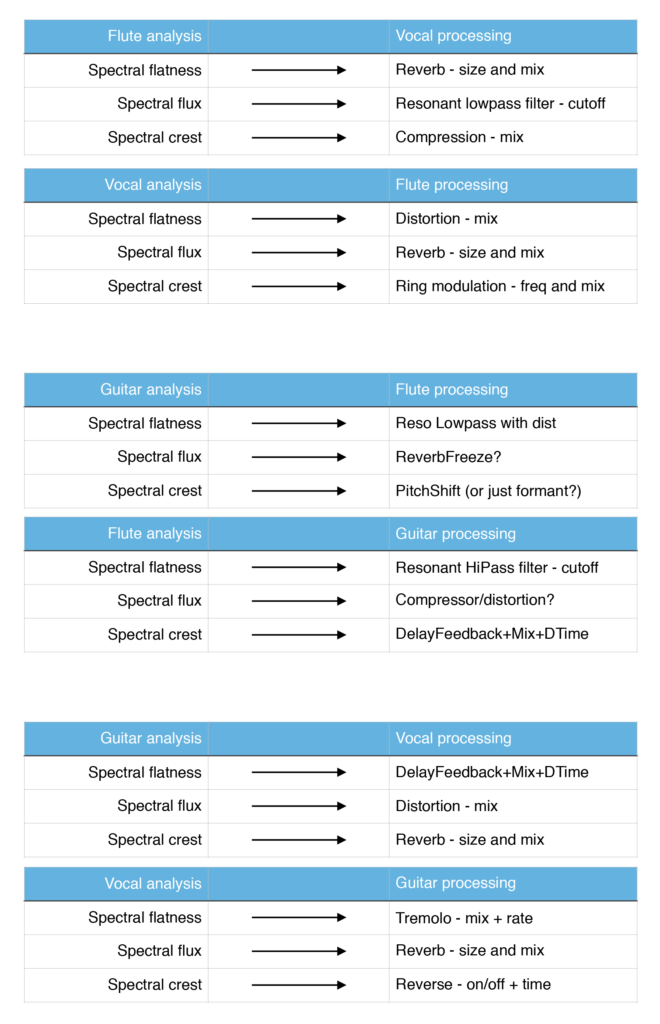

We also discussed the selection of features extracted by the Analyzer, whic ones are more actively used, if any could be removed and/or if any could be refined.

Initial comments

Each of the participants was invited to give their initial comments on these issues. Victor suggests we could rationalize the tools a bit, simplify, and get rid of the Python dependency (which has caused some stability and compatibility issues). This should be done without loosing flexibility and usability. Perhaps a turn towards the originally planned direction of reying basically on Csound for analysis instead of external libraries. Bernt has had some meetings with Trond recently and they have some common views. For them it is imperative to be able to use Ableton Live for the audio processing, as the creative work during sessions is really only possible using tools they are familiar with. Finding solutions to aesthetic problems that may arise require quick turnarounds, and for this to be viable, familiar processing tools. There have been some issues related to stability in Live, which have sometimes significantly slowed down or straight out hindered an effective workflow. Trond appreciates the graphical display of signals, as it helps it teaching performers how the analysis responds to different playing techniques.

Modularity

Bernt also mentions the use of very simple

scaled-down experiments

directly in Max, done quickly with students. It would be relatively easy to make simple patches that combines analysis of one (or a few) features with a small number of modulator parameters. Josh and Marije also mentions

modularity and scaling down

as measures to clean up the tools. Sigurd has some other perspectives on this, as it also relates to what kind of flexibility we might want and need, how much free experimentation with features, mappings and desintations is needed, and also to consider if we are making the tools for an end user or for the research personell within the project. Oeyvind also mentions some arguments that

directly opposes a modular structure,

both in terms of the number of separate plugins and separate windows needed, and also in terms of analyzing one signal with relation to activity in another (f.ex. for cross-bleed reduction and/or masking features etc).

Stability

Josh asks about the stability issues reported. any special feature extractors, or other elements that have been identified that triggers instabilities. Oeyvind/Victor discuss a bit about the Python interface, as this is one issue that frequently come up in relation to compatibility and stability. There are things to try, but perhaps the most promising route is to try to get rid of the Python interface. Josh also asks about the preferred DAW used in the project, as this obviously influence stability. Oeyvind has good experience with Reaper, and this coincides with Josh’s experience at QMUL. In terms of stability and flexibility of routing (multichannel), Reaper is the best choice. Crossadaptive work directly in Ableton Live can be done, but always involve a hack. Other alternatives (Plogue Bidule, Bitwig…) are also discussed briefly. Victor suggests selecting a

reference set of tools, which we document well

in terms of how to use them in our project. Reaper has not been stable for Bernt and Trond, but this might be related to setting of specific options (running plugins in separate/dedicated processes, and other performance options). In any case, the two DAWs of immediate practical interest is

Reaper (in general) and Live (for some performers).

An alternative to using a DAW to host the Analyzer might also be to create a standalone application, as a “server”, sending control signals to any host. There are good reasons for keeping it within the DAW, both as session management (saving setups) and also for preprocessing of input signals (filtering, dynamics, routing).

Simplify

Some of the stability issues can be remedied by simplifying the analyzer, getting rid of unused features, and also getting rid of the Python interface. Simplification will also enable use for less trained users, as it enable self-education and ability to just start using it and experiment. Modularity might also enhance such self-education, but a take on “modularity” might simply hiding irrelevant aspects of the GUI.

In terms of

feature selection

the filtering of GUI display (showing only a subset) is valuable. We see also that the number of actively used parameters is generally relatively low, our “polyphonic attention” for following independent modulations generally is limited to 3-4 dimensions.

It seems clear that we have some overshoot in terms of flexibility and number of parameters in the current version of our tools.

Performative

Marije also suggests we should investigate further

what happens on repeated use

. When the same musicians use the same setup several times over a period of time, working more intensively, just play, see

what combinations wear out

and what stays interesting. This might guide us in general selection of valuable features. Within a short time span (of one performence), we also touched briefly on the issue of using static mappings as opposed to changing the mapping on the fly. Giving the system operator a more expressive role, might also solve situations where a particular mapping wears our or becomes inhibiting over time. So far we have created very repeatable situations, to investigate in detail how each component works. Using a mapping that varies over time can enable more interesting musical forms, but will also in general make the situation more complex. Remembering how performers in general can respond positively to a certain “richness” of the interface (tolerating and even being inspired by noisy analysis), perhaps varying the mapping over time also can shift the attention more on to the sounding result and playing by ear holistically, than intellectually dissecting how each component contributes.

Concluding remarks also suggests that we still need to

play more with it

, to become more proficient, having more control, explore and getting used to (and getting tired of) how it works.